It happened: the first fatal crash with Tesla's Autopilot engaged. Or rather, a semi-automatic pilot. Better still, an autopilot that enables semi-autonomous driving. That last word is the key to all the disinformation that media around the world have built around the story in recent days. You have all read it: a Tesla customer crashed into a truck while Autopilot was active.

Every media outlet has covered it. In Spain, all the newspapers (really, all of them) went wild, often treating an extremely serious story with extreme approximation. Serious both because a person died and because it concerns the technology of the near future, here discussed with superficiality and ignorance.

What struck me most, within my own network of contacts, is how popular Tesla Autopilot and the whole story have become. I have seen it read, shared and commented on by many people I would never have expected to take an interest. This is undoubtedly positive, as it shows that these topics are becoming less and less niche.

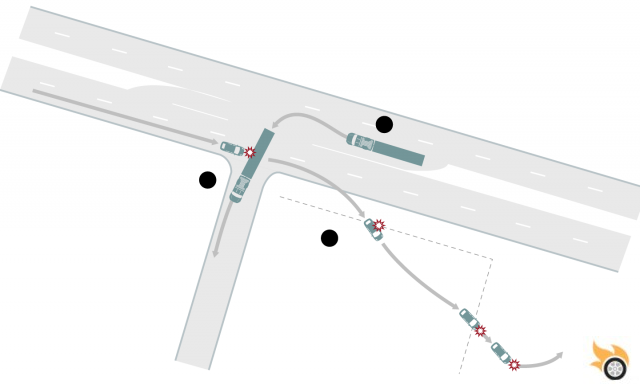

How it happened

Joshua Brown, 40, was driving his black Model S near Williston, in North Florida, on Route 27, a highway. As the Florida Highway Patrol diagram (also used by the New York Times) shows, the truck turned left across the highway onto a perpendicular road. Autopilot did not recognize the truck and did not brake, causing the impact. Because the trailer sat high off the ground, the Model S passed underneath it, destroying the entire upper part of the passenger compartment, then crashed through some fences and some light poles before stopping in a field. The reconstruction by Tesla and the police explains that the car's semi-autonomous driving system did not see the truck because of the trailer's unusually high ground clearance and its white color, which could not be distinguished from the very bright sky at that moment. In fact, not only did Autopilot fail to see the truck, so did the driver, Joshua Brown: the brake was never touched.

One witness reported being overtaken by the Tesla while traveling at about 136 km/h (85 mph), on a road with a much lower speed limit. Of course, Autopilot must be able to react at any speed, so speed has nothing to do with what appears to have been a blind spot in the semi-autonomous driving system. Still, it is hard not to think that a lower speed could have led to a much less serious outcome (always assuming this violation is confirmed).
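To make the speed point concrete, here is a rough back-of-the-envelope sketch in Python. It is not a reconstruction of this crash: the reaction time (1.5 s) and braking deceleration (7 m/s²) are assumed round numbers, and the two speeds compared are purely illustrative. It uses the standard stopping-distance formula (distance covered during the reaction time, plus v²/(2a) while braking) and the fact that kinetic energy grows with the square of speed.

```python
# Illustrative only: reaction time and deceleration are assumed round numbers,
# not values measured in the Florida crash.
REACTION_TIME_S = 1.5     # assumed reaction time before braking begins
DECELERATION_MS2 = 7.0    # assumed hard braking on dry asphalt, in m/s^2


def kmh_to_ms(speed_kmh: float) -> float:
    """Convert km/h to m/s."""
    return speed_kmh / 3.6


def stopping_distance_m(speed_kmh: float,
                        reaction_s: float = REACTION_TIME_S,
                        decel: float = DECELERATION_MS2) -> float:
    """Distance covered while reacting plus distance covered while braking."""
    v = kmh_to_ms(speed_kmh)
    reaction_distance = v * reaction_s        # constant speed until braking starts
    braking_distance = v ** 2 / (2 * decel)   # from v^2 = 2 * a * d
    return reaction_distance + braking_distance


def energy_ratio(speed_a_kmh: float, speed_b_kmh: float) -> float:
    """Kinetic energy scales with v^2, so the ratio is (v_a / v_b)^2."""
    return (speed_a_kmh / speed_b_kmh) ** 2


if __name__ == "__main__":
    for kmh in (90, 130):
        print(f"{kmh} km/h -> about {stopping_distance_m(kmh):.0f} m to stop")
    print(f"Kinetic energy at 130 vs 90 km/h: {energy_ratio(130, 90):.2f}x")
```

Under these assumptions the sketch gives roughly 82 m to stop from 90 km/h versus about 147 m from 130 km/h, with about 2.09 times the kinetic energy at the higher speed. The exact figures depend entirely on the assumed parameters; the point is only that both stopping distance and impact energy grow faster than speed itself.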

The media reaction

With electric mobility, Tesla, automatic piloting, and semi-autonomous and autonomous driving increasingly covered and in vogue, reporting on this fatal accident was a golden opportunity for journalists. A scoop. Everyone talked about it, from the major national outlets to small local newspapers. Even those who had never covered the topic before (a quick search of their websites is enough to confirm it).

Very few articles explained where responsibility actually lies or laid out the necessary premises. Very few journalists explained the difference between autonomous and semi-autonomous driving, a difference that completely shifts responsibility and gives the story an entirely different meaning. Instead, there was an avalanche of approximate articles that triggered, among many readers, critical comments full of crude populism.

So let's try to reset the compass needle according to the actual facts.

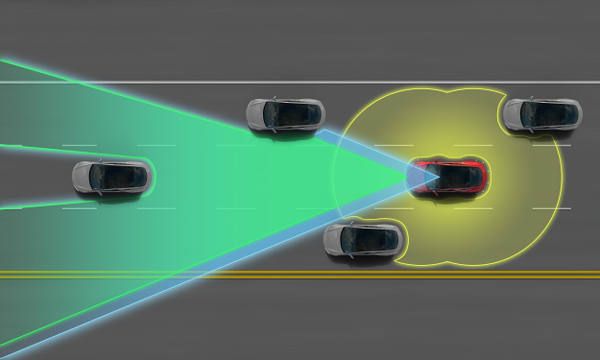

Tesla Autopilot

Tesla Autopilot is an advanced software system that combines data from four hardware technologies: radar, ultrasonic sensors, cameras and GPS. In practice, Autopilot keeps the car centered in its lane. It also lets the driver change lanes with a tap of the turn signal and maintains both following distance and speed according to traffic. On the safety side, the brakes and steering can be activated and controlled precisely to avoid collisions or running off the road. Finally, the car can park itself once it has found a suitable space.

Once activated, Autopilot WARNS the driver to keep their hands on the wheel at all times and to stay alert, so as to be able to intervene at any moment. In addition, the car constantly checks that the driver is awake and attentive, asking them to touch the steering wheel to confirm their presence. If they do not, it emits audible and visual alerts.

To give this its proper weight, I want to stress the point: Autopilot WARNS you to always keep your hands on the wheel.

Indeed, in the note titled "A Tragic Loss", published after the accident, Tesla writes:

“It is important to note that Tesla disables Autopilot by default and requires explicit acknowledgement that the system is new technology and still in a public beta phase before it can be enabled. When drivers activate Autopilot, the acknowledgment box explains, among other things, that Autopilot “is an assist feature that requires you to keep your hands on the steering wheel at all times,” and that “you need to maintain control and responsibility for your vehicle” while using it. Additionally, every time that Autopilot is engaged, the car reminds the driver to “Always keep your hands on the wheel. Be prepared to take over at any time.” The system also makes frequent checks to ensure that the driver’s hands remain on the wheel and provides visual and audible alerts if hands-on is not detected. It then gradually slows down the car until hands-on is detected again.”
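The escalation described in the note (periodic hands-on checks, then visual and audible alerts, then a gradual slowdown until hands are detected again) can be sketched as a small state machine. This is a hypothetical Python illustration, not Tesla's implementation: the class name `AttentionMonitor`, the 15 s and 30 s thresholds and the 5 km/h slowdown step are all invented for the example, since the quoted note gives no such values.

```python
from dataclasses import dataclass

# Hypothetical thresholds: the quoted Tesla note does not publish any of these values.
ALERT_AFTER_S = 15.0      # seconds without hands-on before visual/audible alerts
SLOWDOWN_AFTER_S = 30.0   # seconds without hands-on before the car starts slowing down
SLOWDOWN_STEP_KMH = 5.0   # speed reduction applied on each tick while slowing down


@dataclass
class AttentionMonitor:
    """Hypothetical hands-on-wheel monitor, advanced once per time step."""
    speed_kmh: float
    seconds_without_hands: float = 0.0

    def tick(self, hands_detected: bool, dt_s: float = 1.0) -> str:
        """Update the timer and return the action taken in this step."""
        if hands_detected:
            # Hands back on the wheel: reset the timer, no intervention needed.
            self.seconds_without_hands = 0.0
            return "ok"
        self.seconds_without_hands += dt_s
        if self.seconds_without_hands >= SLOWDOWN_AFTER_S:
            # Keep alerting and gradually reduce speed, never below zero.
            self.speed_kmh = max(0.0, self.speed_kmh - SLOWDOWN_STEP_KMH)
            return "alert+slow"
        if self.seconds_without_hands >= ALERT_AFTER_S:
            return "alert"
        return "ok"


if __name__ == "__main__":
    monitor = AttentionMonitor(speed_kmh=100.0)
    actions = [monitor.tick(hands_detected=False) for _ in range(32)]
    print(actions[13], actions[14], actions[29], monitor.speed_kmh)
    print(monitor.tick(hands_detected=True), monitor.seconds_without_hands)
```

With these assumed values, 32 seconds without hands on the wheel produce alerts from the 15th second onward and three 5 km/h slowdown steps, bringing the speed from 100 to 85 km/h; touching the wheel resets the timer. A check like this can only prompt the driver; it cannot guarantee attention.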

Autopilot is in beta and is a semi-autonomous driving technology

As mentioned, it is a particularly advanced system, but one that is in beta. Being in beta means being in a testing phase: it must be used with certain precautions, accepting its limits, which are known.

Moreover, whether or not Autopilot is in beta, it is a semi-autonomous driving system. This is well known among all Tesla customers.

From this consideration, two paths open up:

- All the articles and discussions about Tesla Autopilot stem from ignorance. In other words: not knowing that Tesla Autopilot is a semi-autonomous driving system, and in beta at that, journalists embarked on an easy but decidedly misleading witch hunt.

- Alternatively, journalists do know this information but deliberately cast the story in a negative light (some strongly positive references to other brands within the same articles give further food for thought).

In my personal opinion, we are mostly in the first scenario, with some exceptions falling into the second.

Semi-autonomous driving is supportive

Using the right words makes the difference. Always. Even more so in this case, where Tesla is being blamed for a fault that, in reality, is not its own. And this is not about being biased. All the information I report in this article is as elementary as it is irrefutable.

Semi-autonomous driving, which Tesla Autopilot enables, is driving in which technology plays only a supporting role. It assists. It helps. It is an addition, not a replacement. This means the driver must always remain vigilant and pay close attention. Failing to do so, with a technology known to be in beta and about which Tesla gives fair warning, is reckless.

The goal of semi-autonomous and autonomous driving is to reduce accidents, collisions and deaths. A computer running a well-designed algorithm can objectively respond very quickly to any event it recognizes as such. Semi-autonomous driving, in particular, always requires a human who is able to intervene, so the driver must behave exactly as when driving normally. In fact, it should be seen the other way around: with Autopilot you have to drive as you would if it were not there. This is the only way to get the most out of the combination of human and machine. It is a combination, and if either of the two fails, safety drops considerably, leading to sad cases like this one. When both do their part, on the other hand, statistics show a clear decrease in accidents and related deaths.

Did Autopilot fail?

Let's get to the point. Tesla Autopilot has always been regarded as particularly safe, consistent with the numerous safety awards Tesla has received. Yet an event like this one, and above all thousands of erroneous and superficial articles about it, are enough to give a completely distorted view of reality.

So the question is: in this case, did Tesla Autopilot fail or not? According to all the information we have, the answer is yes, it failed. And, mind you, this cannot be disputed. Not in this case, not ever: technology, being built and programmed by humans, inherits their capacity to fail. This must be the starting point.

Whose responsibility is it?

The discussion, therefore, is not about whether something failed from a technological point of view. The point is something else entirely: working out who is to blame for the death of Joshua Brown.

Considering that:

- the possibility that Autopilot can fail is widely acknowledged

- the driver is still required to keep his hands on the steering wheel

- the system provides semi-autonomous driving, not autonomous driving

- the system is in beta

It is clear that the error was human. The consequences of any Autopilot malfunction are always subject to the driver's supervision and control, and in this case that supervision does not appear to have taken place. Autopilot, which is in beta, should not and cannot be used as if it were not. In fact, as written above, even if it were out of beta it would make no difference: however safe it may be declared, it remains an "assistance" technology.

In semi-autonomous driving, human and machine are two active entities with the same goal: if one fails to act, for whatever reason, the other must step in.

In any case, as is customary in these situations, the US National Highway Traffic Safety Administration (NHTSA) has announced that it has opened an investigation into how the events unfolded and how Autopilot functioned.

The driver had already avoided an accident thanks to Autopilot

On April 5, about a month before the fatal crash, Joshua Brown had already avoided a collision thanks to Autopilot's intervention. I cannot help taking this detail personally, since I had used Joshua Brown's video in one of my talks at an event where I spoke about Tesla and Autopilot.

What could Tesla do?

Given that Tesla seems to have very little legal exposure, I have thought about what could be done to prevent unpleasant situations like this in the future. I think even more explicit and repeated communication could decisively convey to Tesla's many customers the risks of behavior that does not comply with its policies and its already precise recommendations.

Other than that, I think little else can be done.

Conclusions

After so much noise, it is good to restore some rationality and look ahead. Autopilot is the technology of the future, created to reduce and prevent accidents, and that future is near. This episode helps bring the most important thing back into focus: awareness.

- Awareness that technology needs time to evolve and mature (for example, there were far more plane crashes in the 1960s and 1970s than there are today).

- Awareness of the renewed importance of the human being. The car is not a toy, and Autopilot is an abstract entity at which it is hard to point a finger.

We are the "masters" of technology, and responsible for it. Not the other way around.

For the rest, it is impossible not to share the hope of Joshua's family. Their lawyers have said that the family is actively cooperating with the investigation, in the hope that everything learned from this tragedy will help improve road safety.

What's your opinion? Let me know in the comments below.

my2cents